What is a search engine?

A search engine is a web-based tool that enables users to locate information on the World Wide Web. Popular examples of search engines are Google, Yahoo!, and MSN Search. Search engines utilize automated software applications (referred to as robots, bots, or spiders) that travel along the Web, following links from page to page, site to site. The information gathered by the spiders is used to create a searchable index of the Web.

How search engines work

Search engines work through these main steps:

- Crawling

- Indexing

- Picking the results

…and finally, showing the search results to the user.

What is search engine crawling?

Crawling is the discovery process in which search engines send out a team of robots (known as crawlers or spiders) to find new and updated content. Content can vary — it could be a webpage, an image, a video, a PDF, etc. — but regardless of the format, content is discovered by links.

Googlebot starts out by fetching a few web pages, and then follows the links on those webpages to find new URLs. By hopping along this path of links, the crawler is able to find new content and add it to Google’s index, called Caffeine, a massive database of discovered URLs. That content can later be retrieved when a searcher is seeking information that the content on that URL is a good match for.
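To make the link-hopping idea concrete, here is a minimal sketch of breadth-first discovery. It is not how Googlebot is implemented. The `LINK_GRAPH` dictionary and the example.com URLs are made up for illustration and stand in for fetching real pages and parsing their links.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
LINK_GRAPH = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b", "https://example.com/c"],
    "https://example.com/b": ["https://example.com/"],
    "https://example.com/c": [],
}

def crawl(seed_urls, link_graph):
    """Breadth-first discovery: return URLs in the order they were first found."""
    discovered = list(seed_urls)
    seen = set(seed_urls)
    queue = deque(seed_urls)
    while queue:
        url = queue.popleft()
        # A real crawler would fetch the page and extract its links here.
        for link in link_graph.get(url, []):
            if link not in seen:
                seen.add(link)
                discovered.append(link)
                queue.append(link)
    return discovered

print(crawl(["https://example.com/"], LINK_GRAPH))
```

Starting from a single seed page, the sketch reaches every page that is linked from somewhere, which is why a page with no inbound links is hard for a crawler to find.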

What is a search engine index?

Search engines process and store information they find in an index, a huge database of all the content they’ve discovered and deem good enough to serve up to searchers.
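One common way to picture an index is as an inverted index: a lookup from each word to the pages that contain it. The sketch below is a toy version under that assumption, not a description of any search engine's actual storage. The `PAGES` data and the function name are hypothetical.

```python
from collections import defaultdict

def build_index(pages):
    """Map each lowercase word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in text.lower().split():
            index[word].add(url)
    return index

# Hypothetical crawled pages: URL -> extracted text.
PAGES = {
    "https://example.com/cupcakes": "easy cupcake recipes",
    "https://example.com/bread": "easy bread recipes",
}

index = build_index(PAGES)
print(sorted(index["easy"]))   # both pages contain "easy"
print(sorted(index["bread"]))  # only the bread page contains "bread"
```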

Search engine ranking

When someone performs a search, search engines scour their index for highly relevant content and then order that content in the hopes of solving the searcher’s query. This ordering of search results by relevance is known as ranking. In general, you can assume that the higher a website is ranked, the more relevant the search engine believes that site is to the query.
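As a deliberately simplified illustration of ordering by relevance, the sketch below scores each page by how often the query words appear in its text. Real ranking algorithms weigh far more signals. The scoring rule, the `PAGES` data, and the function name are all assumptions made for this example.

```python
def rank(query, pages):
    """Order URLs by how many times the query words occur in the page text."""
    terms = query.lower().split()
    scores = {}
    for url, text in pages.items():
        words = text.lower().split()
        score = sum(words.count(term) for term in terms)
        if score > 0:
            scores[url] = score
    # Highest score first; ties are broken alphabetically by URL.
    return sorted(scores, key=lambda url: (-scores[url], url))

# Hypothetical indexed pages: URL -> extracted text.
PAGES = {
    "https://example.com/a": "cupcake recipes and more cupcake ideas",
    "https://example.com/b": "bread recipes",
    "https://example.com/c": "travel tips",
}

print(rank("cupcake recipes", PAGES))
```

Page `a` mentions both query words and comes first, page `b` matches only one word, and page `c` matches nothing and is left out.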

It’s possible to block search engine crawlers from part or all of your site, or instruct search engines to avoid storing certain pages in their index. While there can be reasons for doing this, if you want your content found by searchers, you have to first make sure it’s accessible to crawlers and is indexable. Otherwise, it’s as good as invisible.

Most people think about making sure Google can find their important pages, but it’s easy to forget that there are likely pages you don’t want Googlebot to find. These might include things like old URLs that have thin content, duplicate URLs (such as sort-and-filter parameters for e-commerce), special promo code pages, staging or test pages, and so on.

To direct Googlebot away from certain pages and sections of your site, use robots.txt.

Robots.txt

Robots.txt files are located in the root directory of websites (ex. yourdomain.com/robots.txt) and suggest which parts of your site search engines should and shouldn’t crawl, as well as the speed at which they crawl your site, via specific robots.txt directives.
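If you want to check how a set of robots.txt rules would apply to a URL, Python's standard library includes a parser. The sketch below feeds it a made-up robots.txt (the `/staging/` and `/promo-codes/` paths are hypothetical) and asks whether a crawler may fetch two URLs. It shows how this parser reads the rules, which may differ in edge cases from how a given search engine interprets them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for yourdomain.com.
ROBOTS_TXT = """\
User-agent: *
Disallow: /staging/
Disallow: /promo-codes/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# True: nothing in the rules blocks the blog.
print(parser.can_fetch("Googlebot", "https://yourdomain.com/blog/post"))
# False: the staging section is disallowed for all user agents.
print(parser.can_fetch("Googlebot", "https://yourdomain.com/staging/new-page"))
```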

How Googlebot treats robots.txt files

- If Googlebot can’t find a robots.txt file for a site, it proceeds to crawl the site.

- If Googlebot finds a robots.txt file for a site, it will usually abide by the suggestions and proceed to crawl the site.

- If Googlebot encounters an error while trying to access a site’s robots.txt file and can’t determine if one exists or not, it won’t crawl the site.
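The three cases above can be sketched as a small decision function. Mapping them to HTTP status codes (404 for "can't find", 200 for "finds", 5xx for "encounters an error") is an assumption made for this illustration, and the function name is hypothetical.

```python
def robots_txt_policy(status_code):
    """Return the crawl decision for the status code of a robots.txt request."""
    if status_code == 404:
        return "crawl the site (no robots.txt found)"
    if status_code == 200:
        return "crawl the site, following the robots.txt suggestions"
    if 500 <= status_code < 600:
        return "do not crawl (could not determine whether robots.txt exists)"
    # Other responses are outside the three cases described above.
    return "not covered by this sketch"

for code in (404, 200, 503):
    print(code, "->", robots_txt_policy(code))
```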

Indexing: How do search engines interpret and store your pages?

Once you’ve ensured your site has been crawled, the next order of business is to make sure it can be indexed. That’s right — just because your site can be discovered and crawled by a search engine doesn’t necessarily mean that it will be stored in their index. In the previous section on crawling, we discussed how search engines discover your web pages. The index is where your discovered pages are stored. After a crawler finds a page, the search engine renders it just like a browser would. In the process of doing so, the search engine analyzes that page’s contents. All of that information is stored in its index.

Can I see how a Googlebot crawler sees my pages?

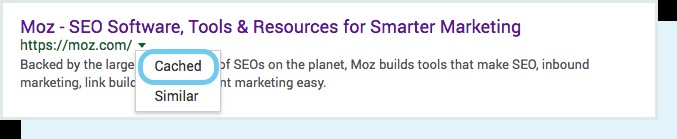

Yes, the cached version of your page will reflect a snapshot of the last time Googlebot crawled it.

Google crawls and caches web pages at different frequencies. More established, well-known sites that post frequently like https://www.nytimes.com will be crawled more frequently than the much-less-famous website for Roger the Mozbot’s side hustle, http://www.rogerlovescupcakes…. (if only it were real…)

You can view what your cached version of a page looks like by clicking the drop-down arrow next to the URL in the SERP and choosing “Cached”:

You can also view the text-only version of your site to determine if your important content is being crawled and cached effectively.

Are pages ever removed from the index?

Yes, pages can be removed from the index! Some of the main reasons why a URL might be removed include:

- The URL is returning a “not found” error (4XX) or server error (5XX) – This could be accidental (the page was moved and a 301 redirect was not set up) or intentional (the page was deleted and 404ed in order to get it removed from the index)

- The URL had a noindex meta tag added – This tag can be added by site owners to instruct the search engine to omit the page from its index.

- The URL has been manually penalized for violating the search engine’s Webmaster Guidelines and, as a result, was removed from the index.

- The URL has been blocked from crawling with the addition of a password required before visitors can access the page.

If you believe that a page on your website that was previously in Google’s index is no longer showing up, you can use the URL Inspection tool to learn the status of the page, or use Fetch as Google which has a “Request Indexing” feature to submit individual URLs to the index. (Bonus: GSC’s “fetch” tool also has a “render” option that allows you to see if there are any issues with how Google is interpreting your page).

Tell search engines how to index your site

Robots meta directives

Meta directives (or “meta tags”) are instructions you can give to search engines regarding how you want your web page to be treated.

You can tell search engine crawlers things like “do not index this page in search results” or “don’t pass any link equity to any on-page links”. These instructions are executed via Robots Meta Tags in the <head> of your HTML pages (most commonly used) or via the X-Robots-Tag in the HTTP header.

Robots meta tag

The robots meta tag can be used within the <head> of the HTML of your webpage. It can exclude all or specific search engines. The following are the most common meta directives, along with what situations you might apply them in.

index/noindex tells the engines whether the page should be crawled and kept in a search engine’s index for retrieval. If you opt to use “noindex,” you’re communicating to crawlers that you want the page excluded from search results. By default, search engines assume they can index all pages, so using the “index” value is unnecessary.

- When you might use: You might opt to mark a page as “noindex” if you’re trying to trim thin pages from Google’s index of your site (ex: user generated profile pages) but you still want them accessible to visitors.

follow/nofollow tells search engines whether links on the page should be followed or nofollowed. “Follow” results in bots following the links on your page and passing link equity through to those URLs. Or, if you elect to employ “nofollow,” the search engines will not follow or pass any link equity through to the links on the page. By default, all pages are assumed to have the “follow” attribute.

- When you might use: nofollow is often used together with noindex when you’re trying to prevent a page from being indexed as well as prevent the crawler from following links on the page.

noarchive is used to restrict search engines from saving a cached copy of the page. By default, the engines will maintain visible copies of all pages they have indexed, accessible to searchers through the cached link in the search results.

- When you might use: If you run an e-commerce site and your prices change regularly, you might consider the noarchive tag to prevent searchers from seeing outdated pricing.

Here’s an example of a meta robots noindex, nofollow tag:

<!DOCTYPE html><html><head><meta name="robots" content="noindex, nofollow" /></head><body>...</body></html>

This example excludes all search engines from indexing the page and from following any on-page links. If you want to exclude multiple crawlers, like googlebot and bing for example, it’s okay to use multiple robot exclusion tags.
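To see which directives a page actually declares, you can read the robots meta tag out of its HTML. The sketch below uses Python's standard `html.parser` module on the example tag above. The class and function names are made up for illustration, and it only looks at `name="robots"`, not crawler-specific names like googlebot.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect the directives from every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )

def robots_directives(html):
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives

page = (
    '<!DOCTYPE html><html><head>'
    '<meta name="robots" content="noindex, nofollow" />'
    '</head><body>...</body></html>'
)
directives = robots_directives(page)
print("noindex" in directives, "nofollow" in directives)
```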

X-Robots-Tag

The X-Robots-Tag is sent in the HTTP response header for a URL. It offers more flexibility and functionality than meta tags when you want to block search engines at scale, because you can use regular expressions, block non-HTML files, and apply sitewide noindex tags.

For example, in an Apache server configuration with mod_headers enabled, you could exclude an entire folder (like moz.com/no-bake/old-recipes-to-noindex) by matching its URL path:

<LocationMatch "^/no-bake/">
  Header set X-Robots-Tag "noindex, nofollow"
</LocationMatch>

Or specific file types (like PDFs):

<Files ~ "\.pdf$">
  Header set X-Robots-Tag "noindex, nofollow"
</Files>

The directives used in a robots meta tag can also be used in an X-Robots-Tag.
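The same idea can be sketched outside of a web server configuration. The example below picks an X-Robots-Tag value for a URL path using regular expressions that mirror the folder and PDF examples above. The `RULES` list and the function name are hypothetical, and a real application would attach the returned value to its HTTP response headers.

```python
import re

# Hypothetical rules: (URL-path pattern, X-Robots-Tag value).
RULES = [
    (re.compile(r"^/no-bake/"), "noindex, nofollow"),  # an entire folder
    (re.compile(r"\.pdf$"), "noindex, nofollow"),      # a file type
]

def x_robots_tag(path):
    """Return the X-Robots-Tag value for a URL path, or None if no rule matches."""
    for pattern, value in RULES:
        if pattern.search(path):
            return value
    return None

for path in ("/no-bake/old-recipes-to-noindex", "/menus/dessert.pdf", "/blog/"):
    print(path, "->", x_robots_tag(path))
```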

Ranking: How do search engines rank URLs?

How do search engines ensure that when someone types a query into the search bar, they get relevant results in return? That process is known as ranking, or the ordering of search results by most relevant to least relevant to a particular query.

To determine relevance, search engines use algorithms, a process or formula by which stored information is retrieved and ordered in meaningful ways. These algorithms have gone through many changes over the years in order to improve the quality of search results. Google, for example, makes algorithm adjustments every day — some of these updates are minor quality tweaks, whereas others are core/broad algorithm updates deployed to tackle a specific issue, like Penguin to tackle link spam. Check out our Google Algorithm Change History for a list of both confirmed and unconfirmed Google updates going back to the year 2000.

Why does the algorithm change so often? Is Google just trying to keep us on our toes? While Google doesn’t always reveal specifics as to why they do what they do, we do know that Google’s aim when making algorithm adjustments is to improve overall search quality. That’s why, in response to algorithm update questions, Google will answer with something along the lines of: “We’re making quality updates all the time.” This indicates that, if your site suffered after an algorithm adjustment, you should compare it against Google’s Quality Guidelines or Search Quality Rater Guidelines. Both are very telling in terms of what search engines want.

Ranking factors

- Well-targeted content – you need to identify what people search for and create quality content tailored to their needs

- Crawlable website – this is a no-brainer – if you want to rank, your website must be easy for search engines to find

- Quality and quantity of links – the more quality pages link to your website, the more authority you’ll have in the eyes of Google

- Content oriented at user intent – SEO is not only about what words you use, but also about the type of content and its comprehensiveness – make your visitor happy and Google will be happy too

- Unique content – be very careful about using duplicate content on your websites

- E-A-T: Expertise, Authoritativeness, Trustworthiness – the E-A-T signals are evaluated by Google’s Quality Raters – never forget to build and prove your expertise and trustworthiness and write only about topics you are qualified for

- Fresh content – some topics require more freshness than the others, but nonetheless, you should regularly update your content to keep it up to date

- Click-through rate – optimize your title tags and meta descriptions to improve the CTR of your pages

- Website speed – make sure your visitors don’t have to wait too long to load the page, otherwise, there’s a high chance they’ll leave before actually visiting it

- Works on any device – your website must work perfectly on any device and screen size (remember that the majority of internet users come through mobile devices!)

Other important factors that may have a positive impact on your rankings:

- Content depth

- Image optimization

- Topical authority

- A well-structured page

- Social sharing

- Use of HTTPS

What do search engines want?

Search engines have always wanted the same thing: to provide useful answers to searchers’ questions in the most helpful formats. If that’s true, then why does it appear that SEO is different now than in years past?

Think about it in terms of someone learning a new language.

At first, their understanding of the language is very rudimentary — “See Spot Run.” Over time, their understanding starts to deepen, and they learn semantics — the meaning behind language and the relationship between words and phrases. Eventually, with enough practice, the student knows the language well enough to even understand nuance, and is able to provide answers to even vague or incomplete questions.

When search engines were just beginning to learn our language, it was much easier to game the system by using tricks and tactics that actually go against quality guidelines. Take keyword stuffing, for example. If you wanted to rank for a particular keyword like “funny jokes,” you might add the words “funny jokes” a bunch of times to your page, and make them bold, in hopes of boosting your ranking for that term:

Welcome to funny jokes! We tell the funniest jokes in the world. Funny jokes are fun and crazy. Your funny joke awaits. Sit back and read funny jokes because funny jokes can make you happy and funnier. Some funny favorite funny jokes.

This tactic made for terrible user experiences, and instead of laughing at funny jokes, people were bombarded by annoying, hard-to-read text. It may have worked in the past, but this is never what search engines wanted.
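One rough way to see how stuffed the example above is: count how often the phrase appears and what share of the words it takes up. The sketch below does that for the sample text. The function name and the density measure are assumptions chosen for illustration, not a metric that any search engine is known to use.

```python
import re

def keyword_density(text, phrase):
    """Return (occurrences of phrase, share of all words taken up by the phrase)."""
    words = re.findall(r"[a-z']+", text.lower())
    target = phrase.lower().split()
    n = len(target)
    count = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    density = count * n / len(words) if words else 0.0
    return count, density

SAMPLE = (
    "Welcome to funny jokes! We tell the funniest jokes in the world. "
    "Funny jokes are fun and crazy. Your funny joke awaits. "
    "Sit back and read funny jokes because funny jokes can make you happy "
    "and funnier. Some funny favorite funny jokes."
)

count, density = keyword_density(SAMPLE, "funny jokes")
print(count, round(density, 2))
```

In this sample the exact phrase shows up five times in 42 words, so nearly a quarter of the text is the keyword itself.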